The era of “counting the fingers” is officially over. By early 2026, generative models like Flux-Gen and Midjourney v8 have largely solved the anatomical glitches that once made AI images easy to spot. Today, you can’t just look for a sixth finger or a blurry hand to debunk a fake. As synthetic media becomes a primary tool for both creative expression and sophisticated digital scams, the “uncanny valley” has moved from the foreground into the subtle physics of the background.

Featured Snippet: To tell if a photo is AI-generated in 2026, look for “semantic logic failures” rather than anatomical glitches. Check for lighting that contradicts the environment, reflections that don’t match the scene, and nonsensical background text. For the most reliable check, use C2PA-compliant tools to verify the “Content Credentials” metadata embedded in the file, when it is present.

The Physics Fail: Why Lighting is the New Fingerprint

Here’s the thing: AI models are excellent at mimicry but terrible at physics. While an AI can render a perfect face, it often struggles to understand how light from a specific source should interact with every object in a complex scene. If you see a portrait where the sun is clearly setting behind the subject, but their face is brightly lit from the front without a visible flash or reflector, you’re likely looking at a composite or a synthetic image.

What most people miss is the “global illumination” error. With a single dominant light source such as the sun, every shadow in the scene should point away from that same source. In AI images, you might find a person’s shadow falling to the left while a nearby tree casts a shadow to the right.

Caption: Image: Example of contradictory lighting and shadow direction.
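The shadow check can be made a little more rigorous than eyeballing. With one roughly point-like light source, the line drawn from each shadow point through the matching point on the object that cast it should pass through about the same spot in the image: the projected position of the light, which for the sun often lies outside the frame. The Python sketch below is a simplified take on that idea from image forensics, not a production tool. It assumes you have clicked matching (shadow point, object point) pairs by hand in pixel coordinates, and the function name `shadow_line_residuals` is hypothetical. It needs at least three pairs, since any two non-parallel lines meet somewhere.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def shadow_line_residuals(pairs: List[Tuple[Point, Point]]) -> Tuple[Point, List[float]]:
    """Hypothetical helper: find the point closest (least squares) to every shadow-to-object line.

    Each pair is (shadow_point, object_point) in image pixel coordinates, annotated by hand.
    Assumes a single light source. Returns that point and each line's distance from it, in pixels.
    """
    if len(pairs) < 3:
        raise ValueError("need at least three pairs; any two non-parallel lines meet somewhere")
    # Accumulate the 2x2 normal equations: (sum of n n^T) p = sum of n * c
    a11 = a12 = a22 = b1 = b2 = 0.0
    lines = []
    for (sx, sy), (ox, oy) in pairs:
        dx, dy = ox - sx, oy - sy
        length = math.hypot(dx, dy)
        if length == 0:
            raise ValueError("shadow point and object point must differ")
        nx, ny = -dy / length, dx / length  # unit normal of the line
        c = nx * sx + ny * sy               # line equation: nx*x + ny*y = c
        lines.append((nx, ny, c))
        a11 += nx * nx
        a12 += nx * ny
        a22 += ny * ny
        b1 += nx * c
        b2 += ny * c
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-9:
        raise ValueError("lines are (nearly) parallel: no finite meeting point")
    # Solve the 2x2 system with Cramer's rule
    px = (a22 * b1 - a12 * b2) / det
    py = (a11 * b2 - a12 * b1) / det
    # Perpendicular distance of each line from the best-fit point (normals are unit length)
    residuals = [abs(nx * px + ny * py - c) for nx, ny, c in lines]
    return (px, py), residuals


if __name__ == "__main__":
    # Three made-up pairs whose lines all pass through (100, 0)
    pairs = [((0, 100), (50, 50)), ((200, 100), (150, 50)), ((100, 200), (100, 100))]
    point, residuals = shadow_line_residuals(pairs)
    print("light projects near", point, "max residual (px):", max(residuals))
```

A large residual on one line means that shadow disagrees with the others. What counts as “large” depends on image resolution and how carefully you clicked, so treat any pixel threshold as your own judgment call.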

Semantic Logic: The Uncanny Valley of Context

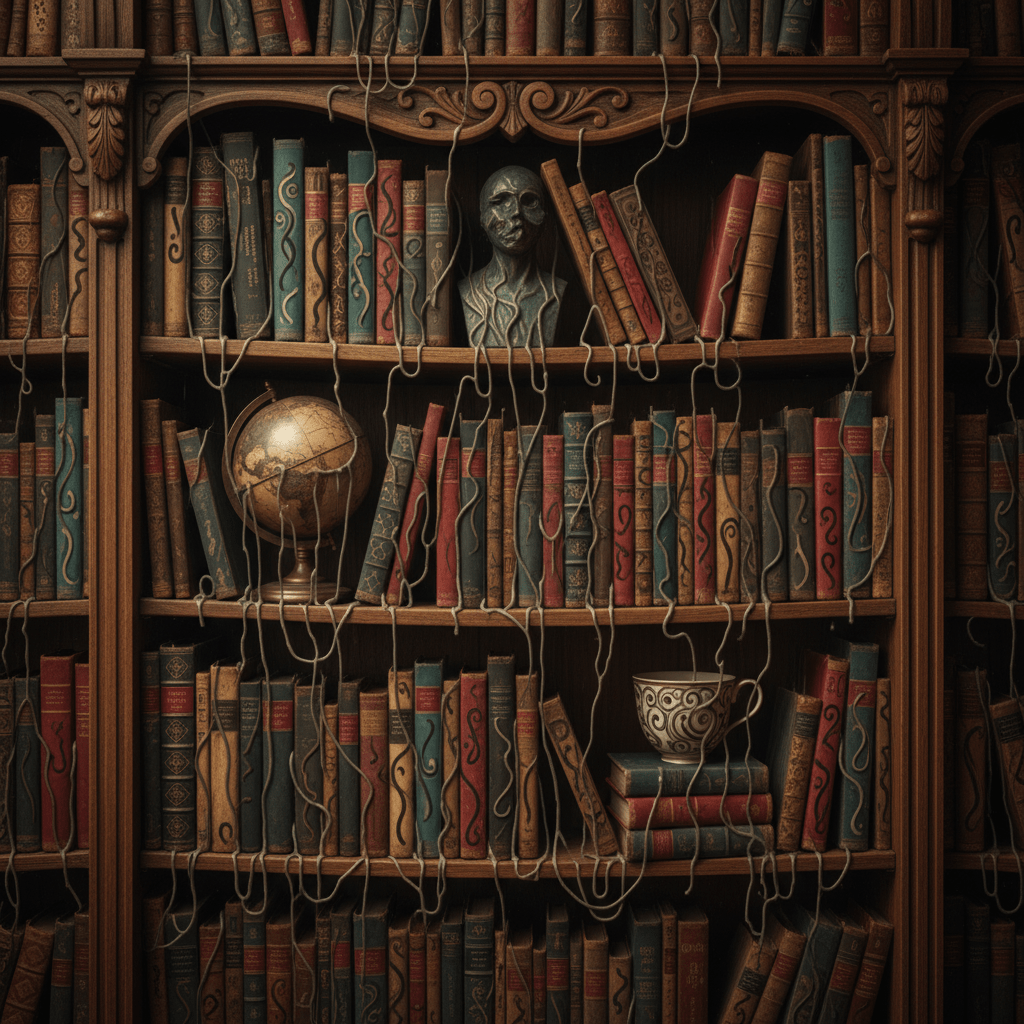

Let’s be honest—AI is still a bit of a “surface-level” thinker. It knows what a bookshelf looks like, but it doesn’t know what a book is. This leads to semantic logic failures. When you zoom into the background of a suspected AI image, look at the text on signs, labels, or book spines.

Even in 2026, AI can still produce “gibberish text”: characters that look like a real alphabet at a distance but dissolve into alien-looking symbols up close, especially in small background signage. Also look for “merging” objects. A real photo will have distinct boundaries between a coffee cup and the table it sits on. In a synthetic image, the handle of the cup might subtly melt into the person’s thumb or the wood grain of the table.

Caption: Image: Background “gibberish” and object merging are key AI indicators.
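A rough way to put a number on “gibberish text” is to check how many of the words on a sign or label are real words. The sketch below assumes you have already extracted the text with an OCR tool of your choice (Tesseract is one option) and that the signage is meant to be English. The function name `dictionary_hit_ratio` and the tiny word list are hypothetical stand-ins.

```python
import re
from typing import Optional, Set


def dictionary_hit_ratio(ocr_text: str, vocabulary: Set[str], min_len: int = 3) -> Optional[float]:
    """Hypothetical helper: share of OCR'd words (at least min_len letters) found in `vocabulary`.

    Assumes English-language text and a lowercase vocabulary.
    Returns None when there is no word long enough to judge.
    """
    words = [w.lower() for w in re.findall(r"[A-Za-z]+", ocr_text) if len(w) >= min_len]
    if not words:
        return None
    hits = sum(1 for w in words if w in vocabulary)
    return hits / len(words)


if __name__ == "__main__":
    # Tiny stand-in vocabulary; in practice you would load a full word list
    vocab = {"fresh", "coffee", "open", "daily"}
    print(dictionary_hit_ratio("FRESH COFFEE - OPEN DAILY", vocab))  # 1.0
    print(dictionary_hit_ratio("FRSEH COFEFE - OPNE DIALY", vocab))  # 0.0
```

A low ratio on a sign that should be readable is a reason to zoom in, not proof. Brand names, abbreviations, foreign languages, and OCR mistakes all lower the score on perfectly real photos.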

Technical Verification: The Rise of Content Credentials

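The short answer to the trust crisis is the C2PA standard. Spurred in part by transparency rules such as the EU AI Act, a growing number of cameras, editing apps, and AI tools now sign images with “Content Credentials.” Think of it as a digital nutrition label: a cryptographically signed record of where an image came from and how it was edited. The catch is that many platforms strip metadata on upload, so a missing label proves nothing on its own.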
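For JPEG files you can at least see whether a Content Credentials manifest is embedded. The C2PA specification stores the manifest in JPEG APP11 segments as JUMBF boxes, and the manifest store is labeled `c2pa`. The Python sketch below (the function name `jpeg_has_c2pa_manifest` is hypothetical) only looks for that signature in the file header. It does not check signatures, hashes, or whether the signer is trusted, and it will miss credentials stored remotely or in other file formats. For a real verdict, use an actual C2PA validator such as the Content Authenticity Initiative’s open-source `c2patool` or the Content Credentials Verify website.

```python
import struct

# Standalone JPEG markers that carry no length field (TEM and RST0-RST7)
_NO_LENGTH = {0x01} | set(range(0xD0, 0xD8))


def jpeg_has_c2pa_manifest(data: bytes) -> bool:
    """Hypothetical helper: True if an APP11 segment looks like a C2PA JUMBF manifest store.

    Presence check only. It does NOT validate signatures, hashes, or trust.
    """
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")
    i = 2
    while i + 1 < len(data):
        if data[i] != 0xFF:
            return False  # lost sync with the marker stream; give up
        marker = data[i + 1]
        if marker == 0xFF:  # fill byte before a marker
            i += 1
            continue
        if marker in (0xD9, 0xDA):  # EOI or start of scan: header segments are over
            return False
        if marker in _NO_LENGTH:
            i += 2
            continue
        if i + 4 > len(data):
            return False
        (length,) = struct.unpack(">H", data[i + 2:i + 4])  # length includes its own 2 bytes
        payload = data[i + 4:i + 2 + length]
        # APP11 (0xFFEB) carries JUMBF boxes; C2PA labels its manifest store "c2pa"
        if marker == 0xEB and b"jumb" in payload and b"c2pa" in payload:
            return True
        i += 2 + length
    return False


if __name__ == "__main__":
    import sys

    for path in sys.argv[1:]:
        with open(path, "rb") as f:
            print(path, jpeg_has_c2pa_manifest(f.read()))
```

Run it as `python check_c2pa.py photo.jpg` (the script name is just an example). A `False` result is common, because many platforms strip metadata on upload, and does not by itself mean the image is fake.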

| Verification Method | Reliability | Best For |
|---|---|---|
| Visual Inspection | Moderate | Quick social media browsing |
| C2PA Metadata | High | Fact-checking and legal evidence |
| AI Detection Software | Low | Low-quality, un-optimized fakes |
| Reverse Image Search | Moderate | Finding original sources of stolen art |

Caption: Diagram: The professional workflow for verifying digital authenticity.

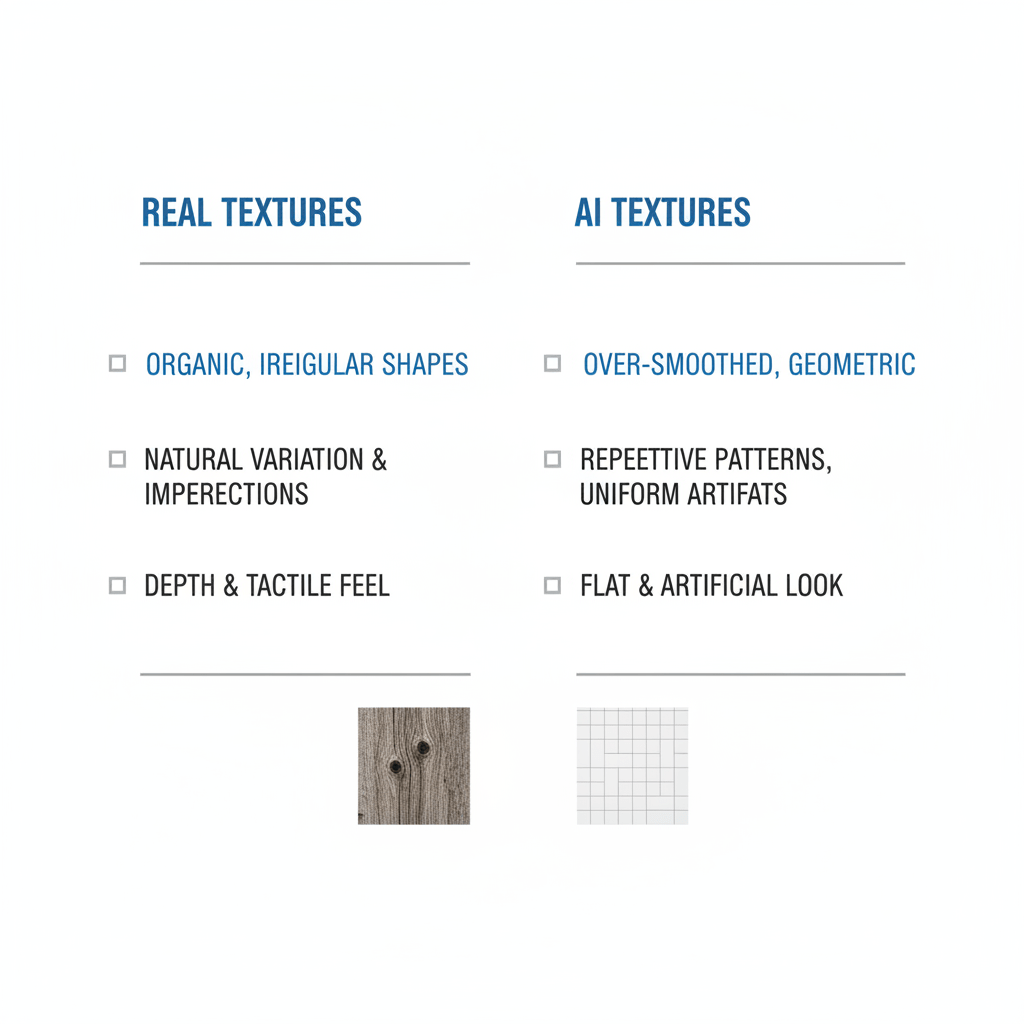

The Unseen Clues: Textures and Reflections

AI struggles with the complex math of reflections. Look closely at sunglasses, windows, or even the pupils of a subject’s eyes. In a real photo, the reflection will match the environment in front of the subject, allowing for the distortion of curved surfaces like lenses and eyes. In an AI image, the reflection is often a generic “indoor” or “outdoor” scene that has nothing to do with where the photo was supposedly taken.

Caption: Diagram: Visualizing the “Physics Fail” in textures and reflections.
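Eyes offer a convenient version of this test. Because both eyes look out at the same scene, the bright highlights reflected in the two corneas should have roughly the same shape and position. Comparing them is a simplified take on a check proposed in research on GAN-generated faces. The sketch below assumes you have already cropped both eyes to the same size, aligned them, and thresholded the brightest pixels into 0/1 masks; the function name `highlight_iou` and any cutoff you apply to its score are hypothetical choices, not an established standard.

```python
from typing import List, Optional

Mask = List[List[int]]  # 1 = bright specular highlight pixel, 0 = everything else


def highlight_iou(left: Mask, right: Mask) -> Optional[float]:
    """Hypothetical helper: intersection-over-union of the two eyes' highlight masks.

    Both masks must already be cropped to the same size and aligned.
    Returns None if neither eye has any highlight to compare.
    """
    if len(left) != len(right) or any(len(a) != len(b) for a, b in zip(left, right)):
        raise ValueError("masks must have the same shape")
    inter = union = 0
    for row_l, row_r in zip(left, right):
        for a, b in zip(row_l, row_r):
            inter += 1 if (a and b) else 0  # highlight present in both eyes
            union += 1 if (a or b) else 0   # highlight present in either eye
    return inter / union if union else None


if __name__ == "__main__":
    left_eye = [[0, 1, 1], [0, 1, 0]]
    right_eye = [[0, 1, 0], [0, 1, 0]]
    print(highlight_iou(left_eye, right_eye))  # 2 shared pixels out of 3 lit in total
```

A score near 1 means the highlights agree. A low score is one more reason to look closely, not proof of a fake: head angle, glasses, and multiple light sources can all make real eyes disagree.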

Trust, but Verify: Using Your Forensic Toolkit

What we are witnessing is a permanent shift in digital literacy. We can no longer assume that seeing is believing. However, by combining your own “semantic” intuition with new metadata tools, you can navigate the 2026 internet with confidence.

Caption: Image: Using modern verification tools to stay safe online.

Don’t let your friends and family be the next victims of a deepfake scam.
